Perceptron Convergence. The Perceptron was arguably the first algorithm with a strong formal guarantee. If a data set is linearly separable, the Perceptron will find a separating hyperplane in a finite number of updates. (If the data is not linearly separable, it will loop forever.)

What is a perceptron? Explain with an example.

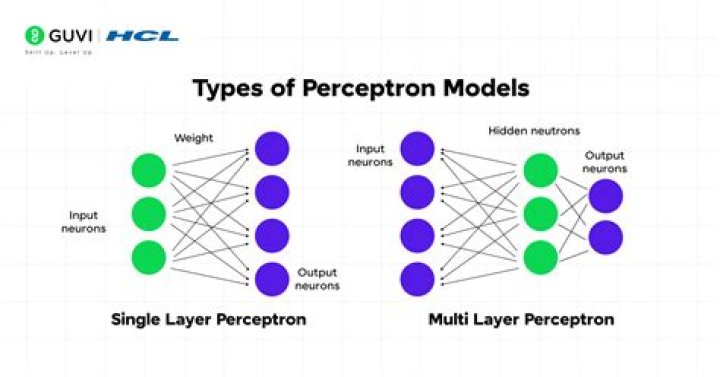

In the context of neural networks, a perceptron is an artificial neuron using the Heaviside step function as the activation function. The perceptron algorithm is also termed the single-layer perceptron, to distinguish it from a multilayer perceptron, which is a misnomer for a more complicated neural network.

How does the perceptron algorithm work?

A perceptron is a neural network unit that performs certain computations to detect features or business intelligence in the input data. It is a function that maps its input "x", multiplied by the learned weight coefficients, to an output value "f(x)".

What is the perceptron training algorithm?

The Perceptron algorithm is a two-class (binary) classification machine learning algorithm. It is a type of neural network model, perhaps the simplest type of neural network model. It consists of a single node or neuron that takes a row of data as input and predicts a class label.

Why does the perceptron algorithm converge?

If your data is separable by a hyperplane, then the perceptron will always converge. It will never converge if the data is not linearly separable.
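The guarantee can be made quantitative with the classical mistake bound. The statement below is a sketch under the usual textbook assumptions, none of which are spelled out in the text above: the weights start at zero, the bias is folded into the weight vector, every training point satisfies $\|x_i\| \le R$, and some unit-norm vector $w^{*}$ separates the data with margin $\gamma > 0$. The symbols $R$, $\gamma$, $w^{*}$ and $k$ (the number of updates) are introduced here for illustration.

$$
y_i \,(w^{*} \cdot x_i) \ge \gamma \ \text{ for all } i, \qquad \|x_i\| \le R \ \text{ for all } i \quad\Longrightarrow\quad k \le \frac{R^{2}}{\gamma^{2}}
$$

The bound is finite whenever a positive margin exists, which is why separable data implies convergence. For non-separable data no such $\gamma$ exists, so the bound says nothing.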

What does the perceptron convergence theorem suggest?

The perceptron convergence theorem states that a classifier for two linearly separable classes of patterns is always trainable in a finite number of training steps. In summary, training a single discrete perceptron as a two-class classifier requires a change of weights if and only if a misclassification occurs.
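The "change of weights if and only if a misclassification occurs" property can be read directly off the usual update rule. The form below assumes labels $y$ and predictions $\hat{y}$ in $\{0, 1\}$ and a learning rate $\eta > 0$; this notation is an assumption, not taken from the text above.

$$
w \leftarrow w + \eta\,(y - \hat{y})\,x, \qquad b \leftarrow b + \eta\,(y - \hat{y})
$$

When the prediction is correct, $y - \hat{y} = 0$ and nothing changes. When it is wrong, $y - \hat{y} = \pm 1$ and the weights move toward or away from $x$.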

What are the stages in a perceptron model?

A perceptron consists of four parts: input values, weights and a bias, a weighted sum, and activation function.
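As a small illustration of how these four parts fit together, here is a minimal sketch in plain Python. The function names and the hand-picked AND-gate weights are assumptions chosen for the example; the weights are not learned here.

```python
def heaviside(z):
    """Step activation: 1 if z >= 0, else 0."""
    return 1 if z >= 0 else 0


def perceptron_output(x, w, b):
    """Weighted sum of the inputs plus the bias, passed through the step function."""
    weighted_sum = sum(wi * xi for wi, xi in zip(w, x)) + b
    return heaviside(weighted_sum)


# Hand-picked (not learned) parameters that implement logical AND.
AND_WEIGHTS = [1.0, 1.0]
AND_BIAS = -1.5

# Evaluate the perceptron on all four binary input pairs.
and_table = {
    x: perceptron_output(x, AND_WEIGHTS, AND_BIAS)
    for x in [(0, 0), (0, 1), (1, 0), (1, 1)]
}
```

Only the input `(1, 1)` pushes the weighted sum above zero, so `and_table` reproduces the truth table of AND.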

Does the perceptron always converge?

Not always. The perceptron learning algorithm produces a linear classifier. If your data is separable by a hyperplane, then the perceptron will always converge. It will never converge if the data is not linearly separable.

What is PLA in machine learning?

The perceptron learning algorithm (PLA) starts from an initial guess for the weight vector (without loss of generality, one can begin with a vector of zeros). It then assesses how good that guess is by comparing the predicted labels with the actual, correct labels (remember that those are available for the training set, since we are doing supervised learning).
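The procedure can be sketched as follows. This is a minimal illustration, not a reference implementation: it assumes labels in {-1, +1}, a learning rate of 1, and a hypothetical `max_epochs` cap so that the loop stops on data that is not linearly separable.

```python
def train_perceptron(samples, labels, max_epochs=100):
    """Perceptron learning algorithm for labels in {-1, +1}.

    Returns (w, b, converged). max_epochs is a safety cap added for this
    sketch; the textbook algorithm simply runs until no mistakes remain.
    """
    w = [0.0] * len(samples[0])  # start from a vector of zeros
    b = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, y in zip(samples, labels):
            activation = sum(wi * xi for wi, xi in zip(w, x)) + b
            predicted = 1 if activation > 0 else -1
            if predicted != y:
                # Update only on a misclassification.
                w = [wi + y * xi for wi, xi in zip(w, x)]
                b += y
                mistakes += 1
        if mistakes == 0:
            return w, b, True  # a full pass with no mistakes
    return w, b, False


X = [(0, 0), (0, 1), (1, 0), (1, 1)]
# AND is linearly separable; XOR is not.
and_w, and_b, and_converged = train_perceptron(X, [-1, -1, -1, 1])
xor_w, xor_b, xor_converged = train_perceptron(X, [-1, 1, 1, -1])
```

On the AND labels the loop finds a separating line and stops early. On the XOR labels every pass contains at least one mistake, so the function gives up when the cap is reached.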

How do you know if a perceptron will converge?

On what factor does the number of outputs depend?

The number of outputs depends on the number of classes.

What is Adaline in soft computing?

ADALINE (Adaptive Linear Neuron or later Adaptive Linear Element) is an early single-layer artificial neural network and the name of the physical device that implemented this network. It is based on the McCulloch–Pitts neuron. It consists of a weight, a bias and a summation function.
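For comparison with the perceptron, the sketch below shows one training pass of an ADALINE-style unit. It assumes the LMS (Widrow-Hoff) rule, which the text above does not describe: the error is measured on the linear output of the summation, before any thresholding. The function name, learning rate, and AND data are hypothetical choices for the example.

```python
def adaline_epoch(samples, targets, w, b, learning_rate=0.1):
    """One pass of the LMS rule; returns updated (w, b) and the sum of squared errors."""
    sse = 0.0
    for x, t in zip(samples, targets):
        net = sum(wi * xi for wi, xi in zip(w, x)) + b  # summation function
        error = t - net  # error on the raw linear output, not a thresholded label
        w = [wi + learning_rate * error * xi for wi, xi in zip(w, x)]
        b += learning_rate * error
        sse += error ** 2
    return w, b, sse


X = [(0, 0), (0, 1), (1, 0), (1, 1)]
T = [-1, -1, -1, 1]  # AND with targets in {-1, +1}

w, b = [0.0, 0.0], 0.0
sse_history = []
for _ in range(50):
    w, b, sse = adaline_epoch(X, T, w, b)
    sse_history.append(sse)
```

Unlike the perceptron, this rule adjusts the weights on every sample, including correctly classified ones, because the linear output rarely matches the target exactly.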